Did you know that a single data center powering artificial intelligence can use as much energy as an entire small town? As AI becomes woven into everything from voice assistants to medical technology, its power consumption is quietly straining power grids around the world like never before.

Uncovering the True Cost: The Hidden Energy Drain of Power Consumption AI

The world is quickly waking up to the enormous energy appetite of artificial intelligence. The rewards are clear: on some tasks, AI models now match or outperform humans in complex pattern recognition, language translation, and even creative writing. But as their capabilities grow, so does their demand for power. Data centers, the industrial engines that make AI possible, consume more energy each year, often drawing from electricity grids powered by fossil fuels. According to recent reports, global data center energy consumption is projected to grow another 50% by 2027, raising both operational costs for businesses and the environmental impact for us all.

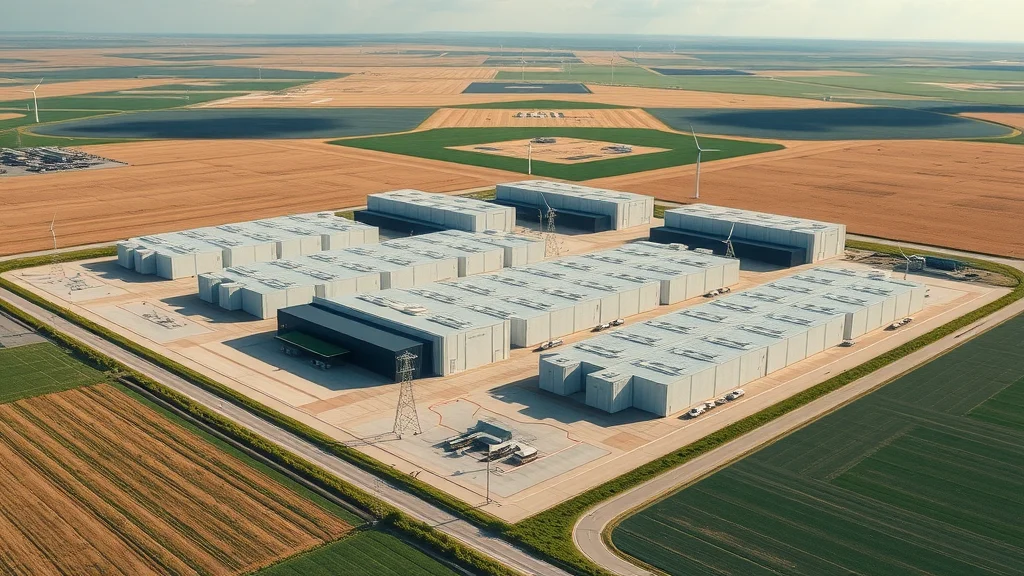

The truth is, the real cost of AI’s power consumption is often invisible. Unlike the devices we see and touch, the servers that run AI models may be half a world away, humming quietly in sprawling data centers. Behind their glowing racks and advanced cooling systems lies an intricate web of electricity consumption, water usage, and carbon emissions that affects our planet at scale. Understanding this hidden power demand is essential, not just for technologists and business leaders, but for every citizen invested in a sustainable future.

“AI’s triumph in solving complex problems comes at a steep cost — its invisible appetite for electricity.”

What You'll Learn About Power Consumption AI and Energy Consumption

How data centers powering artificial intelligence contribute to global energy demand

The role of large language models in surging electricity consumption

Environmental impact of power consumption AI

Current solutions and innovations aimed at mitigating AI energy usage

The Scale of Power Consumption AI in Modern Data Centers

Data Center Energy Consumption in the Age of Artificial Intelligence

Data centers have always been significant consumers of energy, but the arrival of advanced AI workloads has amplified their electricity demand. In the past, servers mainly handled web hosting and simple data storage. Today’s AI data centers work around the clock, running complex AI models on hardware that performs trillions of calculations per second. According to the International Energy Agency, global data centers accounted for nearly 1% of global electricity usage in 2022, with industry experts warning that this share could double by 2030 as AI adoption soars.
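To put the "double by 2030" warning in perspective, the short sketch below converts a growth multiplier over a period into the constant yearly growth rate it implies. The function name is hypothetical, and the calculation assumes smooth compound growth in the share between 2022 and 2030, which real demand is unlikely to follow exactly.

```python
def implied_annual_growth(multiplier: float, years: int) -> float:
    """Constant yearly growth rate that produces `multiplier` after `years` years."""
    return multiplier ** (1 / years) - 1


# A share that doubles between 2022 and 2030 (8 years), assuming steady compounding.
rate = implied_annual_growth(2, 2030 - 2022)
print(f"Implied growth: {rate:.1%} per year")
```

Under that assumption, a doubling over eight years works out to roughly 9% growth per year.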

For context, a single large AI model can use more energy during its initial training phase than several hundred average U.S. homes use in an entire year. The energy demand from data centers is not just about powering servers: air conditioning, cooling systems, lighting, and backup power supplies all factor in. With the growth of generative AI, which requires heavy computation for both training and real-time inference, cumulative energy consumption is climbing quickly.
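The household comparison is simple arithmetic, sketched below. Both inputs are assumptions for illustration and do not come from the article: a round figure of 10,500 kWh per year for an average U.S. home, and a hypothetical training run of 3,000 MWh.

```python
# Illustrative inputs only; neither number comes from the article.
AVG_US_HOME_KWH_PER_YEAR = 10_500  # assumed round figure for annual household use


def homes_powered_for_a_year(training_energy_mwh: float,
                             home_kwh_per_year: float = AVG_US_HOME_KWH_PER_YEAR) -> float:
    """Return how many average homes the training energy could supply for one year."""
    training_energy_kwh = training_energy_mwh * 1_000  # 1 MWh = 1,000 kWh
    return training_energy_kwh / home_kwh_per_year


# Hypothetical training run that consumed 3,000 MWh.
print(round(homes_powered_for_a_year(3_000)))  # -> 286
```

With these assumed inputs the result lands in the "several hundred homes" range; a larger training run or a lower household figure would push it higher.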

Comparison of Energy Usage: Pre-AI vs. AI-Driven Data Centers

| Characteristic | Pre-AI Data Centers | AI-Driven Data Centers |
|---|---|---|
| Typical Power Draw | 5–15 MW | 20–100+ MW |
| Main Workloads | Web hosting, simple storage | Large AI model training, inferencing |
| Cooling Needs | Air-based (standard) | Liquid cooling & advanced systems |
| Impact on Grid | Moderate, local | Severe, affecting regional grids |
| Energy Source Transition | Fossil fuel–dominated | Increasing green energy integration |

Electricity Consumption Trends Among AI Models

As AI models evolve, their appetite for power explodes. Earlier machine learning systems could be trained efficiently on just a few servers. Now, large language models like GPT-4 are trained on clusters of thousands of processing units (GPUs and TPUs) that draw immense amounts of electricity. The energy usage of these models doesn’t just stem from their size; it’s also about their complexity. Every advance in model performance adds what experts call “parameter bloat,” multiplying the dataset size, the computational cycles, and ultimately the kilowatt-hours needed.

According to recent studies, training one state-of-the-art AI model can lead to electricity consumption rivaling the yearly power usage of small cities. And that’s just for training—continued use (called “inference”) further drives up energy demand. The acceleration in electricity demand from data centers has forced the industry and governments to rethink power sourcing and infrastructure planning like never before.

For a deeper look at how political and regulatory actions can influence the energy landscape for AI and renewables, you may find it insightful to explore the impacts of policy decisions on offshore wind jobs and clean energy initiatives. Understanding these dynamics can shed light on the broader challenges of integrating sustainable power sources for high-demand sectors like artificial intelligence.

Environmental Impact and the Role of Power Consumption AI

The widespread adoption of AI brings with it a mounting environmental impact. As data centers consume more and more power, reliance on fossil fuel–based energy sources can lead to substantial increases in greenhouse gas emissions. If current trends continue, AI-driven energy consumption will become a significant contributor to global CO2 emissions, especially in regions where renewable power options are limited.
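Why the regional power mix matters so much can be seen with a rough estimate: emissions are approximately the energy used multiplied by the carbon intensity of the grid supplying it. The sketch below is a simplification under stated assumptions; the function name and the three intensity values are hypothetical and chosen only to show the contrast, not measured figures for any real grid.

```python
def emissions_tonnes(energy_mwh: float, grid_kg_co2_per_kwh: float) -> float:
    """Estimate CO2 emissions in tonnes from energy use and grid carbon intensity."""
    energy_kwh = energy_mwh * 1_000                   # MWh -> kWh
    return energy_kwh * grid_kg_co2_per_kwh / 1_000   # kg -> tonnes


# Hypothetical carbon intensities in kg CO2 per kWh (illustrative, not measured).
GRIDS = {
    "fossil-heavy grid": 0.7,
    "mixed grid": 0.4,
    "renewable-heavy grid": 0.05,
}

# The same hypothetical 1,000 MWh workload on each grid.
for name, intensity in GRIDS.items():
    print(f"{name}: {emissions_tonnes(1_000, intensity):.0f} t CO2")
```

The same workload produces very different emissions depending on where it runs, which is the reason siting and energy sourcing come up so often in this discussion.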

Environmentalists and policymakers are increasingly vocal about the need for greater transparency regarding energy usage in AI models. The true “energy cost” of an AI innovation must start factoring into how companies, governments, and individuals measure the benefits of artificial intelligence. Only by acknowledging this shadow footprint—and working to shrink it—can we hope to make progress toward a sustainable digital future.

Why Are Large Language Models Accelerating Power Consumption AI?

Model Training and Its Impact on Energy Demand

Model training for large language models is infamous for being energy-intensive. Unlike small applications that might run in the background of a smartphone, creating a competitive AI model involves weeks or even months of round-the-clock computation on specialized hardware. Electricity demand peaks during training, especially for the biggest advances in natural language processing and computer vision.

Training versus inference: Where is the bulk of electricity demand?

The growing resource requirements of large language models

Most of the energy drain tends to occur during training, when models ingest massive datasets—often petabytes of text, images, or video. Once the model is deployed, ongoing “inference” also demands significant, though usually lower, energy. The gap between the two is narrowing, however, as AI is used increasingly in always-on applications like real-time translation and generative AI content. This relentless pace puts sustained pressure on power grids and infrastructure, creating an urgent push for smarter energy usage practices across the sector.

Scaling Language Models: Balancing Performance and Energy Usage

The ongoing race to develop bigger and better AI models means that every new generation demands more energy than the last. “Scaling up” a model doesn’t just increase performance; it can drive steep, faster-than-linear increases in electricity consumption. Quite simply, while doubling an AI model’s size may improve accuracy, it can quadruple the power demand, stretching data centers’ capabilities to the limit.
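Read literally, "doubling the size can quadruple the power demand" describes a power law with an exponent of 2. The sketch below shows how quickly that compounds over successive doublings. The function and the exponent are illustrative assumptions taken from that one sentence, not an established scaling law for any particular model family.

```python
def relative_energy(size_ratio: float, exponent: float = 2.0) -> float:
    """Energy multiplier when model size grows by `size_ratio`,
    assuming energy scales as size ** exponent (exponent 2 is an assumption)."""
    return size_ratio ** exponent


# Successive doublings of model size under the assumed exponent.
for doublings in range(1, 5):
    ratio = 2 ** doublings
    print(f"{ratio}x size -> {relative_energy(ratio):.0f}x energy")
```

Under this assumption, four doublings (a 16x larger model) would need 256x the energy, which is why efficiency work matters more with every generation.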

“Every doubling of AI model size can multiply its energy demand exponentially.”

Developers and engineers are beginning to focus on ways to balance this tradeoff. Strategies include model optimization (reducing unnecessary parameters or calculations), “pruning” less-used neural network connections, and exploring new data-efficient training techniques. However, the pressure to lead in AI performance often eclipses efficiency efforts, especially in highly competitive sectors. The challenge is clear: if we want smarter AI, we also need it to be greener—and fast.

The Energy Demand Dilemma: Mitigating the Impact of Power Consumption AI

Technological Innovations to Curtail AI Energy Usage

Advanced chips and hardware-efficient architectures

Smart data center cooling solutions

Green energy integration for AI workloads

Fortunately, new technologies are rising to meet the energy demand challenge. Next-generation AI chips—like those built with hardware-efficient architectures—slash electricity use by completing more calculations on less power. Companies are redefining the very structure of data centers: switching from energy-hungry air conditioning to targeted liquid-cooling solutions, wringing even more efficiency from every kilowatt-hour. Meanwhile, green energy is gaining ground. Solar and wind installations are transforming how AI data centers source electricity, especially in places like the United States and Europe.

The International Energy Agency notes that the integration of renewables into AI data center operations is expected to accelerate in the coming years, easing the burden on the power grid and cutting the sector’s environmental impact. However, sustained investment, clear policy guidance, and a willingness to prioritize sustainability over speed are crucial for real progress.

Industry Efforts: Reducing Environmental Impact in Data Centers

The push for greener AI isn’t just happening at the technology level. Industry leaders are coming together to establish new standards and common goals. Tech giants now regularly report their energy sources, publicize investments in environmental research, and pledge to achieve net zero emissions from all data centers in the coming decade. Companies are reconsidering where they build new facilities—selecting regions with the cleanest energy mix or natural advantages for cooling.

In addition, several high-profile collaborations are forging best practices in everything from server stacking to water reuse and waste heat recovery. Nonprofits and regulators are weighing in too, calling for consistent metrics to measure energy usage. As the momentum grows, so does hope for a future where AI can actually lead the way in climate-friendly, low-energy innovation.

Power Consumption AI and the Broader Societal Implications

Real-World Effects of Increased Energy Usage from Artificial Intelligence

The consequences of unchecked AI data center growth ripple far beyond industry circles. As power-hungry data centers spring up near cities, they sometimes outcompete local residents and businesses for available electricity—occasionally requiring grid upgrades or new energy sources. Rural communities may see land and water resources diverted to massive server warehouses. In some cases, higher electricity demand from AI even translates into increased rates for families and small businesses, sparking debate about who should bear the cost of innovation.

On a global scale, countries with advanced digital economies are racing to secure clean power and build smart, resilient infrastructure. The flipside is stark: areas reliant on fossil fuel–driven grids may see mounting environmental impact and emissions, deepening inequality in environmental progress. As artificial intelligence touches more facets of our lives, responsible energy stewardship is becoming a matter of broad public interest and ethical responsibility.

Regulatory Pressures and the Push for Efficiency in AI

Governments and international agencies are starting to respond to the energy impact of AI data center growth. In the EU, new rules will require all data centers, including those used primarily for AI models, to disclose their energy consumption and emissions. In the United States, energy agencies are developing incentives for AI workloads built on renewables and stricter emissions standards for digital infrastructure. The global “green AI” movement is quickly becoming a mainstream topic in both policy circles and boardrooms.

These pressures are reshaping the industry, driving investments in energy-efficient technologies, and sparking creative solutions to the energy demand dilemma. The journey toward truly sustainable, low-impact AI is only beginning, but the signs of real change are finally coming into focus.

Watch: An animated explainer video shows how modern data centers leverage advanced cooling techniques, AI-optimized hardware, and large-scale renewable energy systems to manage power consumption. The visuals provide a behind-the-scenes look at virtual server rooms, detailed energy schematics, and step-by-step guides to smarter, more efficient AI deployment.

People Also Ask About Power Consumption AI

How much power does AI consume?

AI’s power draw ranges from a few watts for small on-device applications to many megawatts for training large language models in major data centers, where total consumption can rival that of a small town.

Does AI take a lot of power to run?

Yes, AI’s power consumption is significant, especially for training and running large AI models. The energy usage can be substantially higher than that of traditional computing workloads.

What is the 30% rule in AI?

The '30% rule' suggests that up to 30% of the operational costs for artificial intelligence projects in enterprise can be attributed to electricity consumption.

Is AI really making electricity bills higher?

Absolutely. The rapid adoption of AI and the resulting growth in energy demand are raising electricity bills for both data centers and cloud service providers.

Key Takeaways on Power Consumption AI and Data Center Energy Consumption

AI and data centers are a driving force in surging global energy consumption

The environmental impact of artificial intelligence cannot be ignored

Smart investments and regulations are required to curb the energy drain

FAQs on Power Consumption AI, Data Centers, and Future Trends

Q: What are some emerging solutions to reduce AI power consumption in data centers? A: Advanced energy-efficient chips, liquid cooling, renewable energy sourcing, and AI workload optimization are key innovations reshaping data center design and operation for sustainability.

Q: Can renewable energy completely offset the environmental impact of AI data centers? A: While renewables can significantly reduce emissions, full offset depends on regional power mix, data center location, and the pace of green infrastructure development worldwide.

Q: Is AI energy consumption expected to plateau anytime soon? A: No; as generative AI and advanced language models proliferate, energy demand is expected to escalate further, requiring even more aggressive efficiency strategies.

Power Consumption AI: Final Thoughts and A Vision for Responsible Energy Usage

As AI transforms the world, its power consumption must become a central consideration. Prioritizing efficient design, responsible policy, and clean energy is the path to unlocking AI’s benefits—without burning out our planet.

Take the Next Step Toward Smarter Energy: Buy Your New Home With Zero Down Reach Solar Solution

Ready to reduce your footprint, save on electricity, and power your future? Buy Your New Home With Zero Down Reach Solar Solution and be part of a smarter, more sustainable tomorrow!

Ready to Make a Change? Check Out the Reach Solar Review: https://reachsolar.com/seamandan/#about

Buy Your New Home With Zero Down Reach Solar Solution: https://reachsolar.com/seamandan/zero-down-homes


If you're interested in the intersection of energy, technology, and policy, consider exploring how political actions can shape the future of clean energy jobs and infrastructure. The article on why political actions threaten offshore wind jobs in America offers a broader perspective on the challenges and opportunities facing the renewable sector. By understanding these larger forces, you can gain a more holistic view of how sustainable energy solutions and AI advancements are deeply interconnected—and why proactive engagement is essential for a greener, more resilient future.

The energy consumption associated with artificial intelligence (AI) is a growing concern, with data centers, including those running AI, consuming approximately 1.5% of global electricity in 2024, a figure projected to more than double by 2030. (allaboutai.com) This surge is largely due to the intensive computational power required for training and operating large AI models. For instance, training OpenAI’s GPT-4 model reportedly consumed over 50 gigawatt-hours of electricity, enough to power San Francisco for three consecutive days. (livescience.com) Additionally, AI data centers are significant water consumers, using billions of gallons annually for cooling purposes. (en.wikipedia.org) To address these challenges, the industry is exploring solutions such as developing energy-efficient hardware, implementing advanced cooling techniques, and integrating renewable energy sources into data center operations. (forbes.com) These efforts aim to mitigate the environmental impact of AI’s growing energy demands. For a visual overview of how AI is driving massive power consumption, you might find the following video insightful: How AI is Driving Massive Power Consumption
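A comparison like "50 gigawatt-hours, enough to power a city for three days" can be sanity-checked by converting it into the average power it implies. The sketch below does only that unit conversion; the function name is hypothetical, and the result is what the quoted figures imply rather than a measured value for San Francisco.

```python
def implied_average_demand_mw(energy_gwh: float, days: float) -> float:
    """Average power in MW implied by spending `energy_gwh` evenly over `days` days."""
    energy_mwh = energy_gwh * 1_000  # GWh -> MWh
    hours = days * 24
    return energy_mwh / hours


# 50 GWh spread over three days, as quoted above.
print(f"{implied_average_demand_mw(50, 3):.0f} MW average demand")
```

The quoted figures imply an average citywide demand of just under 700 MW, which gives a concrete sense of scale for a single training run.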
