Did you know that data centers are projected to consume almost 8% of global electricity by 2030? High-performance computing energy demands are forcing an urgent re-evaluation of current practices. This unprecedented surge in energy consumption is not just a technical issue—it’s an economic and environmental turning point. As organizations and individuals depend ever more on data centers and computing centers for everything from financial analysis to artificial intelligence, the pressure to optimize high-performance computing energy and costs has never been greater. In this opinion-based guide, you’ll discover how energy efficiency and energy innovation within HPC (high-performance computing) can drive immediate and lasting cost savings.

A Surprising Look at High-Performance Computing Energy and Cost Efficiency

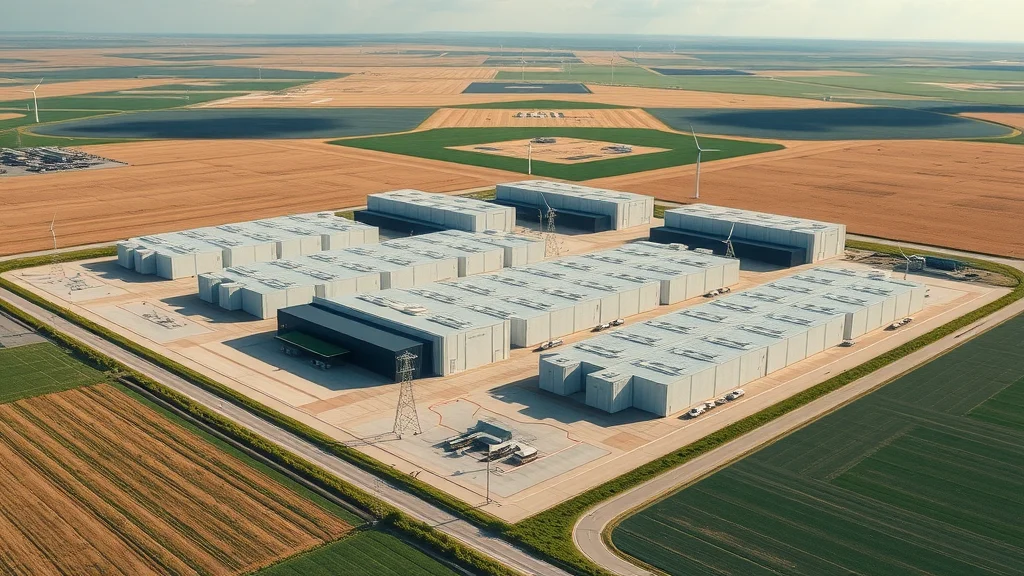

As technology rapidly evolves, high-performance computing energy usage is skyrocketing. Data centers have become essential infrastructure worldwide, supporting everything from weather prediction and advanced research to financial analytics and streaming entertainment. Yet behind these conveniences lies a quiet but significant issue: the vast amount of energy consumed by these massive computing centers. In the quest for computational power, organizations often overlook mounting energy bills and the environmental toll. This is where the real opportunity lies: cutting-edge energy efficiency measures and new energy innovations can yield substantial energy and cost savings while keeping essential services running reliably.

New research and industry reports point to an urgent need to rethink current high-performance computing practices. Energy innovation is rapidly becoming the differentiator that determines whether data centers, research communities, and HPC application providers can thrive, both financially and ethically, in the coming years. By understanding and leveraging the relationship between power consumption, parallel computing strategies, and renewable energy adoption, organizations can turn today’s risks into tomorrow’s competitive advantages.

For organizations seeking to further optimize their energy strategies, it's important to recognize how external factors—such as policy changes and political actions—can impact the broader energy landscape. For example, shifts in government priorities have had significant effects on renewable energy sectors, as seen in the impacts of political decisions on offshore wind jobs and the future of sustainable infrastructure.

What You'll Learn About High-Performance Computing Energy

The financial and environmental impact of high-performance computing energy

Latest trends in energy efficiency for performance computing

Breakthroughs in energy innovation for HPC applications

Opinion-based perspectives on driving down energy costs in computing centers

Defining High-Performance Computing Energy in Modern Data Centers

What is HPC in energy?

High-performance computing (HPC) in energy refers to the massive compute resources required to run complex, intensive computational workloads such as climate simulation, seismic imaging for oil and gas, energy market analysis, and the development of advanced materials. In essence, an HPC infrastructure is a network of powerful servers, often housed in data centers, that can perform trillions of calculations per second. All this raw power comes at a price: the energy required to run, cool, and maintain these computational giants is substantial and can represent a large share of an organization’s operating costs.

This means that the energy consumed by HPC systems is not just a function of compute performance; it is closely tied to data center design, hardware choices, and the efficiency of cooling systems. The U.S. Department of Energy and its national laboratories, along with many government agencies worldwide, frequently stress the need to upgrade existing computing infrastructure. By deploying innovative technologies, data centers can reduce the power needed for high-performance tasks while maximizing energy and cost savings and maintaining reliable performance. This matters more than ever as energy prices fluctuate and climate concerns intensify.

How High-Performance Computing Energy Drives Performance Computing

The success of performance computing relies on delivering computational resources quickly and at scale, which inherently affects the amount of energy used. Advances in compute speed, whether for scientific research or financial modeling, have historically pushed energy consumption upward. However, energy efficiency breakthroughs are enabling these systems to deliver more computation for essential operations without a corresponding spike in total energy use. Advances such as liquid cooling, AI-driven workload management, and custom hardware for HPC applications are all shifting the balance toward greener, more responsible computing.
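Part of the reason efficiency gains can coexist with higher power draw is that power (kilowatts) and energy (kilowatt-hours) are different quantities: energy is average power multiplied by runtime. The short Python sketch below illustrates that arithmetic. The function name and every number in it are hypothetical assumptions chosen for illustration, not measurements from any real system.

```python
"""Power vs. energy: a hypothetical comparison of two compute nodes."""


def job_energy_kwh(avg_power_kw: float, runtime_hours: float) -> float:
    """Return energy in kWh for a job running at a constant average power."""
    return avg_power_kw * runtime_hours


# Hypothetical figures, chosen only to illustrate the arithmetic.
# Older node: lower power draw, but the job takes 12 hours.
old_node_kwh = job_energy_kwh(avg_power_kw=0.5, runtime_hours=12.0)

# Newer node: three times the power draw, but the job finishes in 3 hours.
new_node_kwh = job_energy_kwh(avg_power_kw=1.5, runtime_hours=3.0)

print(f"Older node: {old_node_kwh:.1f} kWh")
print(f"Newer node: {new_node_kwh:.1f} kWh")
print(f"Saved per job: {old_node_kwh - new_node_kwh:.1f} kWh")
```

In this made-up scenario, the newer node draws three times the power yet uses 25% less energy per job (4.5 kWh versus 6.0 kWh), because it finishes four times faster.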

Data centers leading the charge have begun harnessing renewable energy sources, intelligent cooling solutions, and parallel computing techniques to ensure superior performance without unsustainable energy bills. Thus, as high-performance computing becomes more central to every technology-driven field, its energy footprint can be intelligently managed—turning previously wasteful practices into a wellspring of energy and cost savings for businesses and society alike.

High-Performance Computing Energy: Real-World Examples and Key Applications

What are some examples of HPC?

Real-world HPC applications span every major industry. In the energy sector, high-performance computing is essential for modeling oil and gas reservoirs, optimizing wind and solar deployments, and running simulations of power grid reliability. The research community leverages HPC for breakthroughs in medical imaging, genomics, and drug discovery. National laboratories, such as those of the U.S. Department of Energy, use HPC to predict climate change, simulate nuclear interactions, and test new materials without expensive prototypes. The data centers powering cloud computing and large-scale AI are themselves heavily reliant on robust, energy-efficient infrastructure. All these use cases underscore why minimizing energy consumption in HPC systems is critical for cost savings, sustainability, and operational excellence.

HPC Applications: Powerhouses of Data Centers

Inside today’s top-tier computing centers, high-performance computing environments are the heart of progress. Whether running multi-petabyte data analytics platforms, providing real-time financial data feeds, or modeling energy-efficient engines for the automotive industry, IT teams treat energy consumption and management as top priorities. The data centers supporting these environments are constantly evolving: adopting advanced cooling, using parallel computing frameworks to optimize workloads, and shifting toward renewable energy sources to relieve grid pressure.


Is HPC the Same as Quantum Computing? Key Differences in Energy Use

Is HPC the same as quantum computing?

While both high-performance computing and quantum computing sit at the frontier of computational science, they differ fundamentally in how they operate and consume energy. HPC relies on conventional silicon-based architectures, with CPUs and GPUs in tightly interconnected networks; think campus-sized data centers or university supercomputers. Quantum computing, on the other hand, harnesses the unique properties of quantum bits (qubits). It may offer greater efficiency for certain complex problems, but it is currently limited by scalability and stability hurdles.

Despite the hype, quantum computing is still in its infancy, especially regarding reliability and scalability. HPC systems, meanwhile, are the established workhorses driving most enterprise, research, and government-level performance computing for energy initiatives. Thus, energy efficiency in the quantum era will depend on combining the best of both worlds: using HPC for traditional high-throughput workloads while developing quantum systems for new frontiers in cryptography, modeling, and AI.

Comparing Energy Efficiency in Performance Computing and Quantum Computing

When it comes to energy consumption, traditional HPC systems draw significant power: they require sophisticated cooling, continuous power delivery, and redundancy for fault tolerance. Quantum computing, by contrast, may use much less energy per operation, but it relies on highly specialized environments that often demand extreme cooling and strict isolation. Because the energy requirements of scalable quantum hardware are not yet fully understood, today’s data center operators must focus on energy efficiency in classical HPC systems, which account for nearly all computational demand today.

| Aspect | HPC | Quantum Computing |
|---|---|---|
| Energy Use | Very High | Low (per qubit, but still early) |
| Scalability | Excellent | Emerging |

High-Performance Computing Energy in the Stock Market: A Game-Changer

What is HPC in the stock market?

The world’s leading financial markets run on data, and high-performance computing is the force behind lightning-fast trades, real-time risk analysis, and sophisticated fraud detection. Modern trading floors and hedge funds operate sprawling computing centers to process millions of transactions each second. This immense computational demand translates into substantial energy consumption, making energy efficiency both a competitive advantage and a financial imperative. The energy and cost savings achieved through optimized HPC applications let firms invest more in innovation, analytics, and customer value rather than in ballooning utility bills.

In the finance sector, HPC is also helping to revolutionize portfolio management, forecasting of global economic trends, and simulation of market volatility. By embracing smarter data center operations, from efficient cooling to renewable energy integration, financial institutions can meet regulatory standards, reduce their environmental footprint, and protect profit margins in a fast-changing digital landscape.

Opinion: Why Energy Efficiency Must Drive Performance Computing

"Adopting energy efficiency within performance computing isn’t just smart business—it's a social and ecological imperative."

The Environmental Cost of Inefficient Computing Centers

Inefficient computing centers are among the fastest-growing contributors to global energy demand. The heavy power consumption of older data center designs not only weighs down IT budgets but also accelerates environmental risks. In my opinion, continued reliance on outdated high-performance computing architectures is unsustainable, both economically and ecologically. Modern performance computing for energy must be reimagined with climate and society in mind, not just computational throughput.

Leading U.S. Department of Energy programs, along with the U.S. Environmental Protection Agency, increasingly emphasize the urgency of deploying energy innovation at scale. Energy efficiency is now the difference between progress and preventable harm, between accelerating economic performance and risking avoidable resource waste.

Energy Innovation: Leading the Charge for Smarter Data Centers

Leaders in the energy sector and computational science are pioneering smarter, cleaner computing center solutions. By investing in HPC infrastructure upgrades, AI-driven resource allocation, and hybrid energy sourcing, organizations can cut waste and sustain growth. My stance is clear: energy innovation, fueled by rapid research, government incentives, and consumer demand, must become the central axis of any modern HPC strategy, ensuring every petaflop of compute delivers maximum business and societal value at minimum environmental cost.


Top Strategies to Cut High-Performance Computing Energy Costs

Utilizing renewable energy (solar, wind) in computing centers

Adopting advanced cooling and airflow management

Leveraging AI-driven resource optimization

Transitioning to energy-efficient hardware for HPC applications

Employing parallel computing to reduce redundant power draws

Applying these strategies in tandem can help businesses and research institutions realize significant energy and cost savings. For instance, implementing parallel computing frameworks distributes workloads more efficiently, minimizing unnecessary resource use. Renewable energy integration cuts operational costs and aligns with sustainability goals, while new hardware can deliver higher compute density with lower power consumption. This holistic approach is critical to future-proofing data centers in an era of mounting energy and cost pressures.
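To see how these strategies translate into cost savings, it helps to do the arithmetic. The Python sketch below estimates annual electricity cost for a facility that runs around the clock, modeling cooling and power-distribution overhead as a multiplier on IT power (the ratio commonly reported as power usage effectiveness, or PUE). Every input here, including the 500 kW IT load, the overhead values, and the $0.10/kWh price, is a hypothetical assumption chosen for illustration, not a benchmark.

```python
"""Rough annual energy-cost estimate for an HPC facility (hypothetical inputs)."""

HOURS_PER_YEAR = 24 * 365  # 8,760 hours; ignores leap years


def annual_energy_cost(it_load_kw: float, overhead_factor: float,
                       price_per_kwh: float) -> float:
    """Estimate yearly electricity cost for a facility running continuously.

    overhead_factor scales IT power up to total facility power to account for
    cooling, power distribution, and other overhead (a PUE-style ratio).
    """
    facility_kw = it_load_kw * overhead_factor
    return facility_kw * HOURS_PER_YEAR * price_per_kwh


# Baseline: hypothetical 500 kW IT load with legacy air cooling (overhead 1.8).
baseline = annual_energy_cost(it_load_kw=500, overhead_factor=1.8, price_per_kwh=0.10)

# Improved: efficient hardware trims IT load by 10%, and better cooling and
# airflow management lower the overhead factor to 1.3 (both assumed values).
improved = annual_energy_cost(it_load_kw=450, overhead_factor=1.3, price_per_kwh=0.10)

print(f"Baseline cost: ${baseline:,.0f} per year")
print(f"Improved cost: ${improved:,.0f} per year")
print(f"Estimated savings: ${baseline - improved:,.0f} per year "
      f"({1 - improved / baseline:.0%})")
```

Under these assumed inputs, the estimate works out to about $788,400 versus $512,460 per year, a saving of roughly $275,940 (35%). Actual results depend on workload mix, local electricity prices, and how overhead is measured, so treat this as a template for plugging in your own numbers.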

Frequently Asked Questions on High-Performance Computing Energy

What is high-performance computing energy?

It’s the energy required to power complex, intensive computational workloads across various industries using advanced data center architectures.

How can I improve energy efficiency in my HPC systems?

Implement cutting-edge cooling, upgrade hardware, integrate renewables, and invest in smarter scheduling algorithms.

What trends are shaping energy innovation in performance computing?

AI-driven management, edge computing, and increased renewable energy integration.
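Smarter scheduling can be as simple as running deferrable batch jobs when electricity is cheapest. The Python sketch below picks the start hour that minimizes the total electricity price for a job of fixed length, using a sliding window over an hourly price forecast. The price list and the `cheapest_start_hour` function are hypothetical illustrations, not part of any particular scheduler.

```python
"""Price-aware start-time selection for a deferrable batch job (sketch)."""

from typing import Sequence


def cheapest_start_hour(hourly_prices: Sequence[float], duration_hours: int) -> int:
    """Return the start index of the contiguous window with the lowest total price.

    Uses a sliding window, so each hour's price is added and removed once.
    """
    if not 0 < duration_hours <= len(hourly_prices):
        raise ValueError("duration_hours must be between 1 and len(hourly_prices)")

    window_cost = sum(hourly_prices[:duration_hours])
    best_start, best_cost = 0, window_cost
    for start in range(1, len(hourly_prices) - duration_hours + 1):
        # Slide one hour forward: add the new last hour, drop the old first hour.
        window_cost += hourly_prices[start + duration_hours - 1] - hourly_prices[start - 1]
        if window_cost < best_cost:
            best_start, best_cost = start, window_cost
    return best_start


# Hypothetical 12-hour price forecast in $/MWh.
prices = [90, 85, 80, 60, 40, 35, 38, 55, 70, 95, 110, 100]

start = cheapest_start_hour(prices, duration_hours=3)
print(f"Start the 3-hour job at hour {start}")  # hour 4 (40 + 35 + 38 = 113)
```

A production scheduler would also weigh deadlines, queue priorities, and forecast uncertainty; this sketch shows only the core idea of shifting flexible work into low-cost hours.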

Key Takeaways: The Future of High-Performance Computing Energy

High-performance computing energy is escalating in cost but can be curtailed with innovation.

Data centers must prioritize energy efficiency to remain viable—environmentally and financially.

Adoption of energy innovation is already reshaping the industry.

Final Thoughts on High-Performance Computing Energy

Now is the time to reimagine your computing center: with each efficiency gain, you future-proof operations, minimize waste, and help build a truly sustainable digital world.

As you consider the future of high-performance computing energy, it's clear that the path forward is shaped not only by technology but also by the broader forces influencing the energy sector. Political decisions and policy shifts can dramatically alter the landscape for renewable energy and sustainable infrastructure, impacting everything from job creation to the viability of new projects. To gain a deeper understanding of how these external factors play a pivotal role, explore the far-reaching effects of political actions on offshore wind jobs in America. This perspective will help you anticipate challenges and opportunities as you drive innovation and resilience in your own energy and computing strategies.

Ready to Take the Next Step?

Ready to be part of the solution?

Ready to Make a Change? Check Out the Reach Solar Review: https://reachsolar.com/seamandan/#about

Buy Your New Home With Zero Down Reach Solar Solution: https://reachsolar.com/seamandan/zero-down-homes

Sources

Data Center Frontier – https://datacenterfrontier.com/energy-datacenter-trends

U.S. Department of Energy – https://www.energy.gov/eere/datacenters/energy-efficient-data-centers

U.S. Environmental Protection Agency – https://www.epa.gov/greencomputing

High-performance computing (HPC) is pivotal in advancing energy research and innovation. The U.S. Department of Energy’s High Performance Computing for Energy Innovation (HPC4EI) program exemplifies this by offering up to $400,000 per industry-led project, along with expertise from national energy laboratories, to enhance manufacturing efficiency and explore new materials for energy applications. (iea.org) Additionally, the National Renewable Energy Laboratory (NREL) has significantly expanded its supercomputing capacity with the Kestrel system, boasting 44 petaflops of computing power. This advancement has propelled over 425 energy research projects in 2024, accelerating progress in areas such as artificial intelligence, materials science, and energy forecasting. (nrel.gov) These initiatives underscore the critical role of HPC in driving energy efficiency and innovation.
