Did you know that training a large AI model can emit as much carbon as five cars in their lifetime? The rise of artificial intelligence isn’t just about smarter machines—it’s also fueling a surge in global energy consumption. From the server racks in data centers to the cooling systems that keep them operational, the energy usage in AI is shaping up to be one of the most critical challenges in the tech world today. In this article, we’ll break down what drives AI’s energy demand, the environmental impact, and what you can do to help shape a more sustainable digital future.

The Startling Reality of Energy Usage in AI

"Did you know that training a large AI model can emit as much carbon as five cars in their lifetime?" – Exploring the environmental impact of artificial intelligence.

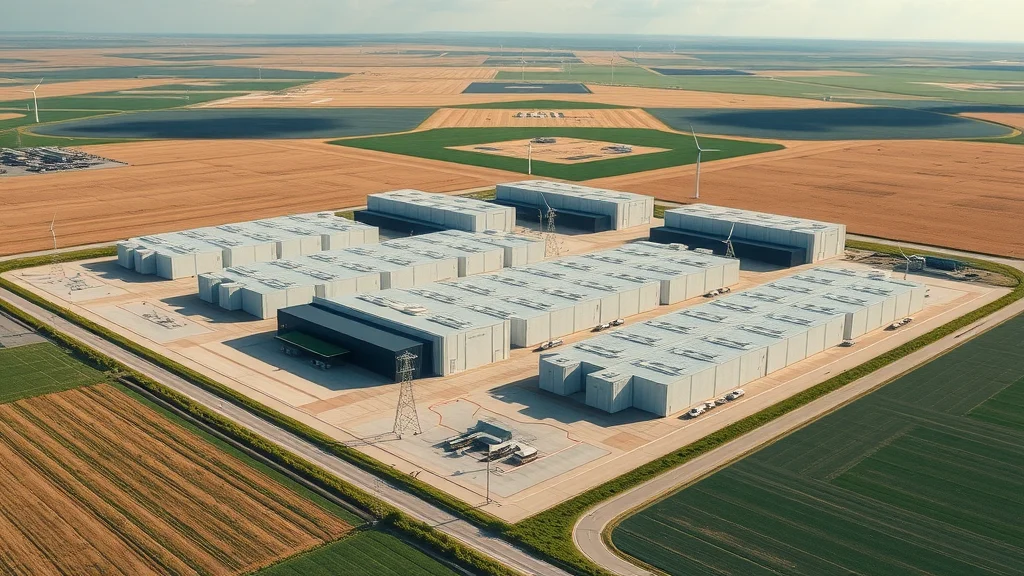

Artificial intelligence (AI) breakthroughs have paved the way for incredible innovations, from powerful language models to generative AI art. But beneath the surface, there’s a hidden cost: energy. Recent findings reveal that training some state-of-the-art AI models requires so much electricity that the resulting carbon footprint exceeds the lifetime emissions of several passenger cars. AI model training is no longer a niche experiment; it’s a massive industrial process occurring in sprawling data centers filled with advanced processing units and vast banks of servers.

The global energy consumption of AI is rising fast. Tech giants and startups alike now operate data centers that are among the largest on the planet, drawing power comparable to small cities. While these facilities are essential for training and serving AI models, their environmental impact is substantial—especially when fueled by fossil power plants or in regions with dirty energy grids. As dependence on AI grows, understanding and addressing the true scope of energy usage in AI is becoming as important as innovating smarter algorithms.

What You'll Learn About Energy Usage in AI

Key statistics on energy consumption in AI

Impacts of data centers and data center energy

Future trends and predictions for energy demand in AI

Strategies for reducing energy usage in AI

Frequently asked questions on electricity consumption and AI model efficiency

Expert opinions on environmental impact

Understanding Energy Usage in AI: Definitions and Scope

Artificial Intelligence, Data Centers, and Energy Consumption

Artificial intelligence refers to the software and systems designed to simulate human-like decision-making and processing. But powering AI, especially the latest AI models, requires a physical backbone: data centers. These are massive buildings packed with servers, storage units, and sophisticated cooling systems that keep the computers from overheating. As AI continues to scale, these data centers draw ever more power, with demand often measured in megawatts and sometimes even gigawatts.

What makes AI stand out in the realm of technology is the sheer intensity of its computational needs. Training advanced AI models like those behind voice assistants or image recognition tools involves running billions, sometimes trillions, of calculations repeatedly for days or even weeks. Each step consumes significant electricity, not only to process AI data but to manage cooling and infrastructure operations too. As more businesses integrate AI into their products and services, the scope of energy usage in AI continues to expand, making its management and optimization all the more urgent.

As organizations seek to optimize their AI operations, it's important to recognize that the energy challenges facing artificial intelligence are not unique—other sectors, such as renewable energy and offshore wind, are also navigating the intersection of technology, policy, and sustainability. For a closer look at how political decisions can impact the growth and job market of clean energy industries, see the analysis on how political actions threaten offshore wind jobs in America.

Electricity Consumption and AI Model Requirements

For every smart chatbot or search engine that deploys artificial intelligence, there is an invisible chain of electricity at work. Modern AI models are so complex that they require many powerful graphics processing units (GPUs) and high-end servers. As each model becomes more advanced, its electricity consumption surges. A recent study by the International Energy Agency found that certain AI workloads in leading data centers now account for a growing share of site-wide power usage.

Furthermore, each stage of AI development—from data preprocessing to actual model training and final inference—draws electricity, contributing to the overall energy demand of AI. AI model requirements keep evolving upward as companies race to develop bigger, better models. As a result, power plants and grids are forced to adapt, sometimes leading to increased reliance on fossil fuels, which exacerbates the environmental impact. Understanding these demands underscores why energy efficiency has become a top focus for both AI researchers and data center engineers worldwide.

The Growing Energy Demand of AI: Data Centers and Energy Consumption

Exploring Demand from Data Center Energy

As AI adoption explodes, energy demand from data centers is climbing at an unprecedented pace. Every chatbot query, image analysis request, or automated translation runs computation-intensive tasks inside purpose-built data centers. According to recent International Energy Agency estimates, global data centers, driven by artificial intelligence and cloud computing, now consume roughly 1.5% of the world’s electricity, a share that is projected to rise. This energy powers not just AI data processing but also server cooling, redundancy, and backup systems.

AI-specific data center growth is driving innovation and pressure on local power infrastructures. As new models emerge and deployments increase globally, electricity demand from data centers continues to surge. This is especially pronounced in areas like the United States, where major tech companies cluster their infrastructure, and in rapidly industrializing economies, where energy resources can be more limited or reliant on fossil fuel power plants. It’s no wonder that industry leaders are sounding the alarm about runaway energy usage in AI.

"AI alone could account for up to 10% of global electricity demand by 2030." – Industry Analyst

Electricity Demand and the Growth of Data Centers

The increasing complexity and adoption of AI workloads have pushed up electricity demand across the entire technology sector. Modern data centers, the main engine rooms for training and serving AI models, require enormous amounts of reliable power, ideally from clean sources, to function effectively. As more tech companies race to make AI models bigger and faster, the infrastructure required to support this growth is stretching both energy grids and environmental resources.

Today, leading-edge AI data centers often seek locations near low-carbon energy sources such as hydroelectric, wind, or nuclear power plants to secure cleaner and more stable electricity flows. However, the global trend suggests that most facilities still rely heavily on conventional electricity grids, many of which depend on fossil fuels. This fuels debates within the industry and among environmental advocates about how to balance the undeniable power of AI-driven innovations with responsible and forward-thinking energy management strategies.

Environmental Impact of Energy Usage in AI

Carbon Footprint and Sustainability Challenges

With every kilowatt-hour consumed by AI data centers comes a corresponding carbon output, especially when the energy comes from coal, natural gas, or oil-burning plants. The carbon footprint of AI development can be substantial: training a single large language model, for example, can emit more CO2 than the annual output of several vehicles combined. This rapid escalation in energy usage and emissions is raising alarms among climate advocates and policymakers, who warn that unchecked growth in AI computing power could set back global efforts to curb greenhouse gases.

The challenge is twofold: not only must the industry address the direct emissions from electricity consumption, but it must also manage the indirect environmental impact—everything from water used in data center cooling systems (often millions of gallons per year) to the electronic waste created by constantly upgrading hardware. As energy demand steadily increases, so does the need for sustainable solutions that address both efficiency and environmental responsibility. The tech sector finds itself at a crossroads, needing to innovate faster than ever—not just in algorithms but in eco-friendly infrastructure.

Renewability and Green Data Centers for Artificial Intelligence

One increasingly popular solution is the shift toward renewable energy and green data centers. Inspired by mounting consumer and regulatory pressure, several leading tech companies now pledge to power their AI operations entirely with green energy—from solar farms to wind turbine arrays. The use of onsite or grid-delivered renewable electricity can sharply reduce the environmental impact of AI workloads, helping to offset the emissions from fossil-fueled power plants.
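To make the effect of the energy source concrete, here is a minimal Python sketch that estimates operational emissions as electricity use multiplied by grid carbon intensity. It assumes that renewable electricity contributes zero operational emissions, which is a simplification. The function name and every number in the example are illustrative assumptions, not figures from any specific facility.

```python
def operational_emissions_tons(energy_mwh: float,
                               grid_intensity_t_per_mwh: float,
                               renewable_share: float = 0.0) -> float:
    """Estimate CO2 (tons) from electricity use.

    Assumption: the renewable share of the supply is treated as
    emitting nothing during operation; the rest is charged at the
    grid's average carbon intensity.
    """
    if not 0.0 <= renewable_share <= 1.0:
        raise ValueError("renewable_share must be between 0 and 1")
    grid_supplied_mwh = energy_mwh * (1.0 - renewable_share)
    return grid_supplied_mwh * grid_intensity_t_per_mwh


# Hypothetical workload: 1,000 MWh on a grid emitting 0.4 t CO2 per MWh.
all_grid = operational_emissions_tons(1000, 0.4)
mostly_renewable = operational_emissions_tons(1000, 0.4, renewable_share=0.75)
print(f"Grid only:      {all_grid:.0f} t CO2")
print(f"75% renewables: {mostly_renewable:.0f} t CO2")
```

Under these assumed inputs, covering three quarters of the supply with renewables cuts the estimate to a quarter of the grid-only figure. A fuller accounting would also include the manufacturing and construction emissions that this sketch ignores.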

Building a truly green data center, however, requires more than just switching electricity sources. Everything from the hardware supply chain to the design of ultra-efficient cooling systems must be optimized for sustainability. Innovations such as liquid immersion cooling, advanced AI-driven facility management, and the use of low-impact construction materials are transforming the way data centers operate. While these eco-friendly approaches show promise, scalability and high up-front costs remain barriers to industry-wide adoption. Nevertheless, it’s clear the future of energy usage in AI must hinge on renewability to remain viable in a climate-conscious world.

Deconstructing Energy Consumption in AI Model Training

Energy Demand for AI Model Training and Inference

The most energy-intensive step in the AI lifecycle is model training. This is where AI models, especially generative AI and large-scale neural networks, process massive datasets for days or even weeks on hundreds or thousands of interconnected GPUs or other processing units. Studies from the International Energy Agency and leading research universities indicate that electricity consumption during this stage can match the yearly usage of entire small towns. The intense computation not only burns through megawatt-hours of electricity but also generates heat, requiring complex cooling systems that further add to electricity demand.
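A rough back-of-the-envelope estimate shows how these factors combine. The sketch below multiplies the number of accelerators, their average power draw, and the training time, then scales the result by power usage effectiveness (PUE), the ratio of total facility energy to IT equipment energy, to account for cooling and other overhead. The function name and all input values are hypothetical and chosen only for illustration.

```python
def training_energy_mwh(num_gpus: int,
                        avg_gpu_power_kw: float,
                        hours: float,
                        pue: float = 1.0) -> float:
    """Estimate facility-level energy (MWh) for one training run.

    pue: power usage effectiveness, total facility energy divided by
    IT equipment energy. It is 1.0 only if cooling and other overhead
    are free, so real values are above 1.0.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_energy_kwh = num_gpus * avg_gpu_power_kw * hours
    return it_energy_kwh * pue / 1000.0  # kWh -> MWh


# Hypothetical run: 1,000 GPUs at 0.4 kW each for 14 days, PUE of 1.2.
energy = training_energy_mwh(num_gpus=1000, avg_gpu_power_kw=0.4,
                             hours=14 * 24, pue=1.2)
print(f"Estimated training energy: {energy:.2f} MWh")
```

This ignores CPUs, networking, storage, and failed or repeated runs, so it is best read as a lower bound for the assumed inputs.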

Yet, even after training, the energy story doesn’t end—inference (using a trained AI model to make predictions or process new data) also draws significant power, especially at scale. Think of the billions of search queries, voice commands, or image analyses processed each day; every output depends on a background web of data center activity, contributing to global energy consumption. As AI applications proliferate, optimizing both training and inference stages for energy efficiency is essential to sustaining future growth without overwhelming power infrastructures or the planet.
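One way to see why inference matters is to ask how long a deployed model must run before its cumulative inference energy matches the one-off training energy. The sketch below does that arithmetic. The query volume and energy per query are placeholder assumptions, since real values vary widely by model and hardware; the 1,287 MWh training figure is the large language model entry from this article's cost table.

```python
def days_until_inference_matches_training(training_mwh: float,
                                          queries_per_day: float,
                                          wh_per_query: float) -> float:
    """Days of serving after which inference energy equals training energy."""
    if queries_per_day <= 0 or wh_per_query <= 0:
        raise ValueError("queries_per_day and wh_per_query must be positive")
    daily_inference_mwh = queries_per_day * wh_per_query / 1_000_000  # Wh -> MWh
    return training_mwh / daily_inference_mwh


# Assumed load: 10 million queries per day at 0.3 Wh each (both hypothetical).
days = days_until_inference_matches_training(
    training_mwh=1287, queries_per_day=10_000_000, wh_per_query=0.3)
print(f"Inference matches training energy after about {days:.0f} days")
```

With heavier traffic or a more expensive model, the crossover arrives sooner, which is why the serving side deserves as much optimization effort as training.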

Comparing Energy Usage in AI With Other High-Tech Sectors

How does energy usage in AI stack up against other sectors? Compared with traditional IT workloads—such as cloud storage or standard business computing—AI demands significantly more electricity due to the computational intensity of training and model updates. Recent benchmarks show that a single large generative AI model can consume as much power during its construction as an entire hospital, while maintaining and serving that same model draws power at rates comparable to streaming video platforms or cryptocurrency mining.

Even as the energy profile of AI rivals and sometimes surpasses these high-tech sectors, the added complexity of specialized hardware, advanced cooling, and round-the-clock operations compounds its impact. This context is crucial for policymakers and industry leaders as they seek to balance investment in AI innovation with sustainable development. As the technology matures, lessons from sectors like cloud computing and green IT could inform more responsible and efficient energy management practices for the future of AI.

The Economics of Energy Usage in AI

Cost Breakdown: Data Center Energy and Electricity Consumption

Running advanced AI data centers comes with substantial electricity costs, sometimes accounting for more than half of overall operational expenses. Beyond direct electricity consumption for servers and network equipment, there are costs for infrastructure maintenance, cooling, and backup systems. These energy-related expenses have a direct impact on the total cost of ownership for AI model development and deployment.

Energy efficiency thus becomes a competitive differentiator. Tech companies are constantly looking for ways to optimize model architectures, choose lower-power hardware, and invest in on-site renewables to lower electricity bills and carbon footprints. The ongoing arms race for the most efficient model and data center is as much about economics as it is about environmental impact. Data-driven decision-making, and even automated AI assistants running the facility, are being deployed to monitor data center energy, cut waste, and enable sustainability.

Cost Comparison of Data Center Energy Consumption for Typical AI Models

| AI Model Type | Training Cost (USD) | Electricity Use (MWh) | CO2 Emissions (tons) |
|---|---|---|---|
| Small NLP Model | $30,000 | 50 | 20 |
| Large Language Model | $1,000,000+ | 1,287 | 552 |
| Image Recognition Model | $65,000 | 112 | 45 |
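The electricity and emissions columns in the table above imply a carbon intensity for each row. As a quick consistency check, the short Python sketch below divides emissions by electricity use. The row values are copied from the table; the function name is an illustrative choice.

```python
# Rows copied from the table: (model type, electricity in MWh, CO2 in tons).
TABLE_ROWS = [
    ("Small NLP Model", 50, 20),
    ("Large Language Model", 1287, 552),
    ("Image Recognition Model", 112, 45),
]


def implied_intensity(mwh: float, tons_co2: float) -> float:
    """Return tons of CO2 emitted per MWh of electricity used."""
    return tons_co2 / mwh


for name, mwh, tons in TABLE_ROWS:
    print(f"{name}: {implied_intensity(mwh, tons):.3f} t CO2 per MWh")
```

All three rows work out to roughly 0.40 to 0.43 tons of CO2 per MWh, so the table appears to assume a similar grid mix for every model. The same workloads on a cleaner grid would emit proportionally less.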

Strategies to Reduce Energy Usage in AI

Algorithmic optimization for less electricity consumption

Low-power data center design

Sourcing renewables for electricity demand

AI model efficiency enhancements

Data center cooling innovations

Expert and Industry Perspectives on Energy Usage in AI

"Building more efficient data centers is crucial for curbing the growing energy consumption of artificial intelligence." – Data Center Specialist

Industry experts underline that, as AI data centers proliferate, only aggressive energy efficiency measures can keep electricity usage in check. AI researchers and sustainability consultants advocate for everything from smarter scheduling of AI workloads to direct investment in clean energy infrastructure. Diverse voices—including government policymakers, engineers, and environmentalists—argue that collaboration across sectors will be required to make meaningful progress.
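Smarter scheduling can be as simple as running a deferrable training job when the grid is cleanest. The following Python sketch, a simplified illustration and not any vendor's scheduler, picks the start hour that minimizes total carbon intensity over the job's duration, given an hourly forecast. The forecast values and the function name are hypothetical.

```python
from typing import Sequence


def best_start_hour(forecast_g_per_kwh: Sequence[float], job_hours: int) -> int:
    """Return the start index of the lowest-carbon contiguous window.

    forecast_g_per_kwh: hourly grid carbon intensity forecast.
    job_hours: how many consecutive hours the job needs.
    Assumes the job draws roughly constant power while it runs.
    """
    if job_hours <= 0 or job_hours > len(forecast_g_per_kwh):
        raise ValueError("job_hours must fit inside the forecast")
    # Sliding-window sum: update the total instead of re-summing each window.
    window = sum(forecast_g_per_kwh[:job_hours])
    best_sum, best_start = window, 0
    for start in range(1, len(forecast_g_per_kwh) - job_hours + 1):
        window += forecast_g_per_kwh[start + job_hours - 1]
        window -= forecast_g_per_kwh[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start


# Hypothetical 8-hour forecast in gCO2 per kWh; the job needs 3 hours.
forecast = [450, 430, 400, 300, 250, 260, 380, 470]
print("Start the job at hour", best_start_hour(forecast, job_hours=3))
```

For this made-up forecast, the cleanest three-hour window starts at hour 3. A production scheduler would also have to weigh deadlines, hardware availability, and electricity prices.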

Leading tech firms are racing to pilot promising solutions, such as AI-driven cooling controls and predictive analytics for optimal power management. Real-world case studies show that even modest improvements in server utilization or cooling efficiency can deliver outsized reductions in both costs and carbon emissions. As public scrutiny of tech sector energy consumption grows, expect increased calls for transparency, reporting, and stricter energy standards from regulators, investors, and customers alike.

Key Takeaways on Energy Usage in AI

AI energy usage is scaling rapidly

Data center energy consumption contributes significantly to global electricity consumption

Efforts to mitigate environmental impact are ongoing but challenging

Innovations in AI model design can directly reduce energy demand

People Also Ask: Core Questions on Energy Usage in AI

How much energy is AI actually using?

Answer: AI's energy usage depends on the scale of models and the number of data center operations involved. For example, training state-of-the-art AI models can use as much electricity as a small town consumes in a year, with substantial portions attributed to data center energy consumption and electricity demand.

What is the 30% rule in AI?

Answer: There is no formal industry standard called the "30% rule." In this context, the term refers to projections that AI workloads could account for up to 30% of a facility's total electricity consumption in the near future, highlighting the urgent need for improved energy efficiency.

How is AI being used in energy?

Answer: AI is widely applied in energy sector optimization, from balancing electricity grids to forecasting energy demand, and automating data centers for greater efficiency, which ultimately can reduce overall energy consumption.

How much energy will AI consume by 2030?

Answer: Projections suggest that energy usage in AI could account for up to 10% of global electricity demand by 2030, driven primarily by the growth in data centers, increasing AI model complexity, and persistent demand for computational resources.

FAQs: Energy Usage in AI

What proportion of data center energy is consumed by AI workloads?

How do cloud computing platforms manage AI energy usage?

Is renewable energy a viable solution for future data center electricity consumption?

What are some benchmarks for eco-friendly AI model development?

Final Thoughts: Shaping a Sustainable Future for Energy Usage in AI

The Critical Role of Innovation and Policy in Reducing Energy Consumption

Reducing energy usage in AI will require more than technological tweaks—it will demand coordinated innovation, committed policy action, and a shared responsibility across industries. By making deliberate choices now, we can ensure that the promise of AI doesn’t come at the cost of our planet’s future.

As the conversation around energy usage in AI continues to evolve, it's clear that the path forward will be shaped by both technological breakthroughs and the broader policy landscape. If you're interested in understanding how external factors—like government decisions and regulatory shifts—can influence the future of sustainable industries, exploring the dynamics of offshore wind jobs in America offers valuable perspective. Discover how political actions can impact clean energy progress and workforce opportunities by reading the in-depth analysis on the impacts of political actions on offshore wind jobs. Gaining insight into these interconnected challenges can help you anticipate the next wave of innovation and advocacy needed for a truly sustainable future.

Sources

Nature Physics – https://www.nature.com/articles/s42254-019-0088-2

Energy and Policy Considerations for Deep Learning – https://arxiv.org/abs/1906.02243

The energy consumption associated with artificial intelligence (AI) is a growing concern, with AI operations projected to account for nearly half of data center power usage by the end of 2025. (theguardian.com) This surge is driven by the computational demands of training and running large AI models, which require substantial electricity and cooling resources. To address these challenges, companies are exploring sustainable energy solutions. For instance, Google has partnered with Elementl Power to develop advanced nuclear energy sites, each expected to generate 600 megawatts of power, aiming to provide carbon-free electricity for its expanding AI operations. (apnews.com) Similarly, Microsoft has invested in nuclear power to meet the growing energy demands of its AI data centers. (en.wikipedia.org) Understanding the environmental impact of AI is crucial for developing strategies to mitigate its carbon footprint. For a comprehensive overview of AI’s energy consumption and its implications, you can refer to the Wikipedia article on the environmental impact of artificial intelligence. (en.wikipedia.org) By staying informed and supporting sustainable practices, we can help shape a more energy-efficient future for AI technologies.
