Did you know? By 2025, it’s estimated that artificial intelligence infrastructure will power over 85% of global enterprise applications, reshaping industries faster than the internet itself did. This hidden backbone doesn’t just support your favorite apps or smart devices—it determines which companies innovate and which fall behind. Unlocking the secrets behind this technology could give your business a competitive edge that lasts for years.

Unveiling the Power of AI Infrastructure: A Surprising Starting Point

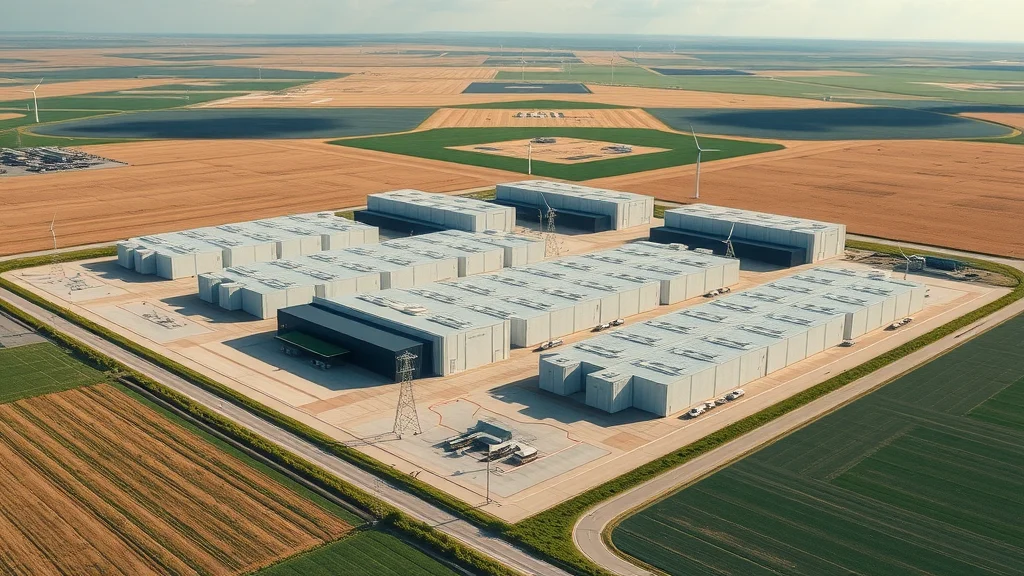

AI infrastructure isn’t just another buzzword—it’s the unseen power source enabling today’s technological leaps. From recommendation engines that learn your habits to the natural language models that fuel chatbots and digital assistants, AI infrastructure shapes how businesses deliver smarter, faster, and more reliable experiences to customers. But what’s most surprising is where it all begins: within vast, climate-controlled data centers humming with custom hardware and software built specifically for AI workloads.

Without the right AI infra, even the most promising AI models can bottleneck, draining resources and stalling development. As organizations handle ever-larger volumes of data, their need for specialized infrastructure—combining powerful GPUs, lightning-fast networking, and dynamic storage—has never been more urgent. Unlike traditional IT suites, modern AI infrastructure is fluid, adaptive, and uniquely crafted to tackle demands like model training and real-time inference. Whether you’re a CTO or a project lead, understanding how this infrastructure operates is no longer optional—it's a competitive necessity.

A Groundbreaking Statistic: The Backbone of Future Technology

Here’s an eye-opener: According to IDC, global spending on AI infrastructure will reach an astounding $80 billion by 2026—a figure rivaling entire national IT budgets. This spending isn’t wasted; it reflects the new reality that AI applications can revolutionize healthcare, finance, logistics, and more—but only if fueled by the right underlying systems. The days of relying on generic IT networks for machine learning tasks are over. Those investing proactively in specialized AI infra are setting themselves up to lead in the era of generative AI and foundation models.


Why AI Infrastructure Matters More Than Ever in the Age of Generative AI

With breakthroughs in deep learning and the explosive growth of language models, the demands on AI infrastructure have skyrocketed. It’s no longer just about having enough compute power—businesses now need infrastructure capable of handling real-time AI application deployment, seamless multi-cloud operations, and even edge AI adaptations. Generative AI tools, for instance, require tremendously robust systems to support model training, instant data processing, and secure, scalable inference tasks.

The rapid evolution of AI and ML frameworks means that static, rigid networks won’t cut it. Instead, companies must deploy adaptable, secure, and high-performance AI infrastructure that keeps pace with changing algorithms, data sets, and user needs. By doing so, they position themselves to take full advantage of disruptive innovations—before their competitors do.

What You'll Learn About AI Infrastructure

Core components of AI infrastructure shaping tomorrow’s innovations

How AI infra differs from traditional IT setups

The critical role of machine learning and deep learning in AI infrastructure

Determinants in choosing the best AI infrastructure provider

How advanced AI models alter infrastructure needs

Future trends in AI infrastructure for strategic advantage

Understanding AI Infrastructure: Foundations and Fundamentals

What is AI Infrastructure?

AI infrastructure is the complete set of hardware, software, data storage, and networking systems required to design, train, deploy, and scale artificial intelligence solutions. Unlike traditional IT infrastructure, which focuses on standard servers and basic enterprise software, AI infra is purpose-built for heavy parallel processing, high-speed data transfer, and rapid scaling to support everything from AI models and ML frameworks to autonomous vehicles and real-time applications. Well-designed AI infra lets unstructured data and massive datasets be processed for model training and inference without bottlenecking the AI workload.

At its core, the difference lies in how AI infrastructure manages the unique compute, storage, and networking needs of machine learning and deep learning—functionality that standard IT systems simply can’t match. As AI applications demand ever-more specialized resources, the foundation beneath them must be equally advanced and flexible, ensuring seamless integration and maximum performance.

The Evolution of AI Infra: From Data Storage to Advanced AI Models

In the early days, AI infrastructure revolved around simple data storage and central processing. But as AI and ML projects grew, so did the complexity and volume of data generated. Legacy systems designed for transactional workloads soon hit their limits, unable to keep up with large volumes of training data or the real-time needs of modern AI applications. The rise of GPU clusters and high-speed networking marked a turning point, enabling much faster parallel processing and unleashing a wave of innovation in model development.

Today, the evolution continues with new layers—cloud-native AI, edge computing, and integrated solutions for training and inference. As foundation models and generative AI reshape the landscape, enterprises now look for ways to future-proof their infra, ensuring it can handle the next leap in AI workload demands.

Key AI Infrastructure Components: Compute, Storage, and Networking

Modern AI infrastructure is built on three foundational pillars: Compute, Storage, and Networking. Compute power—now often driven by GPUs, TPUs, and specialized AI chips—delivers the intense parallelism needed for deep model training and inferencing. Data storage solutions must not only accommodate growing volumes of data, but also provide high-throughput access for rapid model training. Fast, reliable networking enables the seamless movement of data across nodes and to cloud or edge environments, making distributed AI possible.

The synergy between these elements is critical. If any one area—compute, storage, or network—is underpowered, your AI projects will encounter delays, reduced accuracy, and higher costs. Effective orchestration, automation, and real-time monitoring are essential for maintaining performance, security, and compliance across increasingly complex AI environments.
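The bottleneck effect described above can be illustrated with a minimal sketch. It assumes the three pillars act as stages in series, so sustained throughput is capped by the slowest one. The function name and the per-stage rates are hypothetical placeholders, not measurements from any real system.

```python
# Hypothetical per-stage throughput figures (samples per second); not measured data.
def pipeline_throughput(stage_rates):
    """Return (bottleneck_stage, sustained_rate) for stages running in series."""
    bottleneck = min(stage_rates, key=stage_rates.get)
    return bottleneck, stage_rates[bottleneck]


rates = {"storage_read": 12_000, "network_transfer": 8_000, "gpu_compute": 20_000}
stage, rate = pipeline_throughput(rates)

# Fraction of GPU capacity actually used when the slowest stage sets the pace.
gpu_utilization = rate / rates["gpu_compute"]
print(f"Bottleneck: {stage} at {rate} samples/s; GPU utilization about {gpu_utilization:.0%}")
```

In this made-up scenario the accelerators sit idle more than half the time because the network cannot feed them, which is why the three pillars are usually sized together rather than one at a time.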

AI Infra in Machine Learning and Deep Learning Ecosystems

How Machine Learning Relies on Robust AI Infrastructure

Machine learning and deep learning tasks are uniquely demanding: they require tremendous compute, access to enormous and varied data sets, and the ability to rapidly train and deploy new models. A robust AI infrastructure makes all this possible by streamlining data processing, parallelizing training operations, and supporting the sophisticated algorithms at the heart of modern ML models.

As AI and ML frameworks evolve, dependency on high-performance hardware grows. Specialized data storage, powerful accelerators, and fast interconnects enable smooth experimentation and rapid results. Without these, training a single neural network on standard IT systems could take weeks—while AI-optimized infra can deliver results in hours or even minutes.

The Interplay of Deep Learning and AI Infrastructure

Deep learning models, particularly those powering generative AI and foundation models, demand unprecedented resources. Training large language models and complex neural networks involves processing large volumes of unstructured data, making the right infrastructure essential for speed and success. AI infra must manage everything from data ingestion and labeling to distributed model training, supporting the intricate pipelines that turn raw data into actionable intelligence.

Enterprises that invest in scalable, high-speed AI infrastructure can iterate faster, test more thoroughly, and deploy innovations ahead of the curve. As deep learning applications accelerate across industries, having the right foundations in place is the difference between scalable success and project failure.


Core Features of Modern AI Infrastructure

Seamless Data Storage Solutions for AI Models

Modern AI models feed on data, often in staggering volumes. Seamless data storage solutions empower teams to ingest, manage, and process datasets of every kind, from structured to unstructured. State-of-the-art AI infrastructure leverages tiered storage, high-speed SSDs, and intelligent caching to ensure that ML models can access data quickly when needed for model training or real-time inference. These systems also automate backup, archiving, and disaster recovery, so that mission-critical AI projects do not lose their data or trained models.

Unlike traditional data management platforms, AI-centric storage must balance performance, scalability, and cost-effectiveness—accommodating amounts of data that shift rapidly depending on project phase or model size. Cloud-native and hybrid solutions offer flexibility, while on-premises arrays deliver ultra-low latency for the most demanding tasks.
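To make the idea of caching in front of a slower storage tier concrete, here is a minimal sketch of a least-recently-used (LRU) cache. It is one common caching policy, not necessarily what any particular storage product uses. The `LRUCache` class, the `slow_read` loader, and the shard names are all hypothetical.

```python
from collections import OrderedDict


class LRUCache:
    """Tiny least-recently-used cache standing in for a fast SSD or RAM tier."""

    def __init__(self, capacity, load_from_slow_tier):
        self.capacity = capacity
        self.load = load_from_slow_tier  # hypothetical loader for the slow tier
        self.items = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.items:
            self.items.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self.items[key]
        self.misses += 1
        value = self.load(key)
        self.items[key] = value
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)  # evict the least recently used entry
        return value


def slow_read(shard_id):
    # Placeholder for an object-store or disk read.
    return f"data-for-{shard_id}"


cache = LRUCache(capacity=2, load_from_slow_tier=slow_read)
for shard in ["a", "b", "a", "c", "b"]:
    cache.get(shard)
print(cache.hits, cache.misses)  # prints "1 4"
```

With only two slots, the access pattern above mostly misses. Sizing the fast tier against the working set of a training job is the kind of trade-off between performance and cost that the paragraph above describes.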

Training and Inference Capabilities: Ultra-fast Processing for ML Models

Core to AI infra is the ability to handle rigorous training and inference workloads, essential for both developing new ML models and running them at scale. Next-gen AI infrastructure integrates high-performance GPUs, TPUs, and custom accelerators built for parallel processing, drastically reducing the time and cost of model training. For inference tasks, where trained models deliver predictions in real time, speed, consistency, and reliability are non-negotiable.

A well-optimized AI infra can harness hardware and software orchestration, dynamic resource allocation, and containerization to support AI workloads across public cloud, private cloud, and edge environments. This flexibility is how companies meet ever-changing market and customer requirements.

Scalability and Flexibility: Powering Generative AI and Foundation Models

Today’s generative AI and foundation models demand infrastructure that scales both vertically (more power per node) and horizontally (more nodes, often distributed globally). Advanced AI infrastructure can expand or contract on demand, enabling projects to ramp up for massive model training sessions and scale back for less intensive workloads—without breaking the budget.

Such scalability supports not only current needs but future growth as well, giving organizations the agility to pivot as new opportunities in AI development arise. Smart orchestration, multi-cloud strategies, and AI-aware workload management contribute to this adaptability.
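As a rough illustration of horizontal scaling, the sketch below estimates how many nodes a workload needs for a target throughput. The `nodes_needed` function, the 0.9 scaling efficiency, and the sample rates are assumptions chosen for the example. Real clusters rarely scale linearly, and the efficiency factor would have to be measured.

```python
import math


def nodes_needed(target_rate, per_node_rate, scaling_efficiency=0.9):
    """Nodes required to reach target_rate.

    Assumes each node contributes a fixed fraction (scaling_efficiency)
    of its standalone rate once it joins the cluster.
    """
    effective_rate = per_node_rate * scaling_efficiency
    return max(1, math.ceil(target_rate / effective_rate))


# Ramp up for a large training run, then scale back for lighter work.
print(nodes_needed(target_rate=10_000, per_node_rate=1_500))  # prints 8
print(nodes_needed(target_rate=2_000, per_node_rate=1_500))   # prints 2
```

An autoscaler could call a function like this periodically and add or release nodes to match, which is the expand-and-contract behavior described above.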

Comparison of Top AI Infrastructure Providers: Features, Pricing, and Support

| Provider | Compute & GPUs | Storage Solutions | Networking | AI/ML Tools | Pricing Model | Support |
|---|---|---|---|---|---|---|
| AWS | NVIDIA GPUs, custom AI chips | S3, FSx, scalable object storage | Direct Connect, high bandwidth | SageMaker, broad ML frameworks | Usage-based, reserved instances | 24/7, enterprise plans |
| Google Cloud | TPUs, NVIDIA GPUs | Cloud Storage, persistent disks | Premium global VPC | Vertex AI, TensorFlow integration | Flexible, pay-as-you-go | Premier support, consulting |
| Microsoft Azure | NVIDIA GPUs, FPGA | Azure Blob, Data Lake Storage | ExpressRoute, high throughput | Azure ML, Cognitive Services | Hourly, reserved capacity | 24/7, advanced tier |
| NVIDIA DGX Cloud | Cutting-edge GPUs, multi-node | Integrated AI data management | Low-latency interconnects | NGC catalog, optimized containers | Subscription, enterprise-scale | Specialized AI support |

How AI Applications and Development Flourish with the Right Infrastructure

Optimizing AI Application Performance with Next-Gen AI Infrastructure

Optimized AI infrastructure translates directly into smoother, faster, and more reliable AI application performance. When compute, storage, and networking are well balanced, even complex ML models and real-time AI services can run without avoidable delays, hiccups, or data loss. Businesses that upgrade their infra often see reduced time to deployment, improved customer experiences, and consistent scaling no matter the size of their AI workloads.

High-performing infra also enables teams to experiment more freely, iterate on new AI models, and rapidly test new ideas—critical in industries where speed and innovation define market winners.

Accelerating AI Development: Workflow Enhancements from AI Infrastructure

Smart AI infrastructure can dramatically shorten development timelines. Automated pipelines, integrated data management, and support for popular AI and ML frameworks help teams move from data ingestion to final deployment with minimal friction. Secure environments, versioning, and support for containerized AI workloads mean developers spend less time managing hardware and more time building game-changing solutions.

Workflow enhancements extend to monitoring and maintenance: real-time analytics and predictive diagnostics help IT teams catch issues before they disrupt AI application launches or AI model retraining. This raises productivity and ensures organizational resources are spent on innovation, not troubleshooting.

Case Study: High-impact AI Applications Enabled by Strong AI Infra

Consider a major healthcare provider leveraging cutting-edge AI infrastructure to speed up diagnoses and optimize patient treatments. By integrating high-speed data storage and advanced compute, their teams rapidly processed medical images, trained deep learning neural networks, and delivered diagnoses in minutes instead of days. This not only improved patient outcomes but also gave the company a lasting market edge against competitors still relying on traditional IT infrastructure.

Such success stories echo across sectors, from financial institutions automating fraud detection to logistics giants optimizing supply chains with AI applications. The secret in every case: modern, reliable, and scalable AI infrastructure.

“AI infrastructure is not just the backbone—it is the beating heart of modern enterprise innovation,” says a leading AI analyst.

Profiles: Who is the Leader in AI Infrastructure?

Current Industry Leaders in AI Infra

Today, AWS, Google Cloud Platform, Microsoft Azure, and NVIDIA dominate the AI infrastructure conversation, delivering state-of-the-art resources at global scale. Each boasts an ecosystem of tools for machine learning, AI applications, and model training. Innovative startups and cloud-native challengers are vying for niche leadership as well, especially where highly verticalized or edge-focused solutions are needed.

It’s not just size or budget that sets a leader apart. Adaptability, service reliability, continuous innovation, and customer support all play a role in making an AI infra provider stand out in a fiercely competitive market.

What Makes a Top AI Infrastructure Provider Stand Out?

A leading provider will offer more than just hardware—they deliver a comprehensive ecosystem with AI and ML tools, robust security and compliance features, seamless multi-cloud and hybrid integrations, and a portfolio designed for both legacy workload migration and next-gen innovation. Partners are also seeking transparent pricing, customizable support plans, and a clear roadmap for integrating new foundation models and generative AI features as they become available.

Flexibility (via APIs, data infrastructure integrations, and compatible AI/ML frameworks) and a commitment to ethical, sustainable, and responsible AI round out the list of must-haves.

Key Metrics Comparing Leading AI Infrastructure Companies

| Provider | Global Coverage | AI-Optimized Compute | Framework Integration | Support/SLAs | Unique Innovations |
|---|---|---|---|---|---|
| AWS | 30+ regions | Broadest GPU/AI chip selection | All major ML, proprietary AI | 99.99% uptime, 24/7 | SageMaker, custom silicon |
| Google Cloud | 40+ regions | Exclusive TPU clusters | TensorFlow, Vertex AI | Guaranteed uptime, rapid AI support | AI Hub, AI-powered search |
| Azure | 60+ regions | FPGA + NVIDIA hybrid | Azure ML, OpenAI integration | Priority response, custom SLAs | OpenAI partnership, Data Lake AI |
| NVIDIA DGX | Selective global availability | Next-gen GPUs, multi-node | NGC, industry toolkits | Bespoke AI support | Deep learning leadership |

Types and Architectures: Exploring the 4 Types of AI Systems

Narrow AI and Broad AI: Infrastructure Implications

Narrow AI (also known as weak AI) powers focused tasks like image recognition or chatbots, while Broad AI starts to approach general problem-solving skills across multiple domains. Each presents different infrastructure challenges: narrow systems can rely on more specialized hardware or on-premises deployments, but broad AI requires a flexible, scalable infra that can support diverse data sources, multiple ML models, and variable workload patterns.

Choosing between them often dictates architectural requirements: whether a company needs siloed systems for specific AI applications or a single unified infrastructure that can serve a wide variety of use cases.

General AI and Super AI: What Next for AI Infrastructure?

While true General AI (AGI) and Super AI are still theoretical, their future arrival brings profound implications for infrastructure. Anticipated AGI systems will require self-configuring, self-healing networks with extreme parallelism and the ability to process multi-modal input across vast, distributed data centers. As these systems become reality, the demands on AI infrastructure for autonomy, security, and reliability will surpass anything seen today.

Forward-looking vendors are already exploring how to build infra with greater autonomy, built-in ethical oversight, and adaptability for the rapidly evolving needs of tomorrow’s most ambitious AI models.


The 30% Rule in AI: What It Means for AI Infrastructure

Applying the 30% Rule: Cost, Capacity, and ROI for AI Infra

The “30% Rule” is a rule of thumb gaining traction among AI infrastructure leaders: organizations should budget for at least a 30% increase in resources (compute, data storage, or expert staff) every time they scale up AI initiatives. This rule helps companies avoid performance drops and protects ROI by aligning infrastructure growth with demand for new AI models and real-time AI applications.

Ignoring this guideline often leads to overloaded environments, delayed deployments, or runaway costs. Smart investment in scalable AI infra lets businesses keep up with rapidly expanding AI workloads while still maintaining predictable budgets and performance targets.
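The arithmetic behind the 30% rule can be sketched in a few lines. This example assumes the 30% increase compounds with each scale-up round, which is one possible reading of the rule. The `scaled_budget` function and the 100,000 baseline are hypothetical.

```python
def scaled_budget(baseline, scale_ups, growth=0.30):
    """Budget after a number of scale-up rounds, compounding growth each round."""
    return baseline * (1 + growth) ** scale_ups


# Hypothetical baseline of 100,000 (any currency) across three scale-up rounds.
for rounds in range(4):
    print(rounds, round(scaled_budget(100_000, rounds)))
# 0 100000
# 1 130000
# 2 169000
# 3 219700
```

Under that assumption, three rounds of scaling more than double the original budget, which is why the rule is usually paired with the capacity planning discussed above.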

Emerging Trends and Future Directions in AI Infrastructure

Foundation Models, Generative AI, and the Future of AI Infra

The newest wave—foundation models and generative AI—demands ever more sophisticated and dynamic AI infrastructure. These tools require multi-cloud orchestration, global data access, and on-demand scaling for both training and deployment. As models grow in size and complexity, vendors are racing to deliver even more efficient, secure, and reliable infrastructure—blending cloud flexibility with next-gen on-premises accelerators.

Future direction points to hybrid AI infrastructure, multi-modal AI approaches, and privacy-first strategies—ensuring real-time learning and inference can safely occur anywhere, from the data center to the network edge.

Towards More Efficient Model Training and Inference

Efficiency is becoming the hallmark of great AI infra. Techniques like edge AI infrastructure, automated workload scheduling, and integration with cloud-native tools are reshaping what’s possible. Companies are also deploying new management tools that continuously optimize resource allocation, reduce energy consumption, and slash training times for large language models and deep learning networks.

Growing influence of edge AI infrastructure

Integration of AI infrastructure with cloud-native approaches

Rise of automated AI infrastructure management tools
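Automated workload scheduling, mentioned above, can be illustrated with a small greedy heuristic: sort jobs from longest to shortest and hand each one to the accelerator with the least work queued. This is a textbook approach chosen for brevity, not a description of any vendor's scheduler. The job names and hour estimates are made up.

```python
import heapq


def schedule(jobs, num_gpus):
    """Assign jobs (name -> estimated hours) to GPUs.

    Greedy longest-first heuristic: each job goes to the GPU with the
    smallest accumulated load. Returns (assignment, makespan_hours).
    """
    heap = [(0.0, gpu) for gpu in range(num_gpus)]  # (load, gpu index)
    heapq.heapify(heap)
    assignment = {gpu: [] for gpu in range(num_gpus)}
    for name, hours in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        load, gpu = heapq.heappop(heap)  # least-loaded GPU so far
        assignment[gpu].append(name)
        heapq.heappush(heap, (load + hours, gpu))
    makespan = max(load for load, _ in heap)
    return assignment, makespan


# Hypothetical jobs with estimated run times in hours.
jobs = {"train_llm": 8, "finetune": 3, "eval": 2, "batch_infer": 4, "embed": 1}
assignment, makespan = schedule(jobs, num_gpus=2)
print(assignment)
print(makespan)  # prints 9.0
```

Production schedulers also weigh priorities, memory limits, and data locality, but the core idea of balancing load to shorten total run time is the same.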

Key Considerations When Choosing AI Infrastructure

Checklist: What to Look for in an AI Infrastructure Provider

Compute Power: Are there enough GPUs/AI chips to meet your needs?

Data Storage: Can the provider handle your required volumes of structured & unstructured data?

Networking: How fast is their connectivity and is it globally distributed?

Support for ML Model and Tools: Do they offer seamless integration with preferred frameworks?

Scalability: How quickly can you scale up or down as AI workloads change?

Pricing Transparency: Ask about all ongoing costs, not just initial rates.

Security & Compliance: Are there robust protections for sensitive data and regulatory requirements?

Innovation Roadmap: Does the provider invest in new features, foundation models, and generative AI support?
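One way to apply the checklist above is a weighted scoring matrix. The sketch below is only an illustration: the weights, the 1 to 5 ratings, and the provider labels are hypothetical, and a real evaluation would set them from your own requirements and testing.

```python
def score_provider(ratings, weights):
    """Weighted average of checklist ratings (1 = poor, 5 = excellent)."""
    total_weight = sum(weights.values())
    return sum(ratings[item] * weight for item, weight in weights.items()) / total_weight


# Hypothetical weights reflecting how much each checklist item matters to one team.
weights = {
    "compute": 3, "storage": 2, "networking": 2, "ml_tools": 2,
    "scalability": 2, "pricing": 1, "security": 3, "roadmap": 1,
}

# Hypothetical ratings for two unnamed providers.
providers = {
    "Provider A": {"compute": 5, "storage": 4, "networking": 4, "ml_tools": 3,
                   "scalability": 4, "pricing": 2, "security": 4, "roadmap": 3},
    "Provider B": {"compute": 3, "storage": 4, "networking": 3, "ml_tools": 5,
                   "scalability": 4, "pricing": 5, "security": 5, "roadmap": 4},
}

scores = {name: score_provider(r, weights) for name, r in providers.items()}
for name, value in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {value:.2f}")
```

Making the weights explicit forces a team to agree on priorities before comparing vendors, so the final ranking reflects stated needs rather than the most polished sales pitch.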

Security, Compliance, and Ethical AI Infrastructure

Security isn’t optional—it’s core to AI infrastructure selection. The right provider will enforce strong encryption, granular access controls, and automated compliance checks for regulatory needs. Equally critical is a commitment to ethical AI practices: transparent data use, fair model training processes, and thorough bias mitigation. As AI powers ever more sensitive applications in healthcare, finance, and beyond, organizations need partners that champion trust, privacy, and responsible innovation in every infrastructure layer.

By ensuring ethical and regulatory needs are met from day one, businesses guard against reputational and legal risks—all while fostering user trust and competitive differentiation.

People Also Ask: Core Questions About AI Infrastructure

What is the AI infrastructure?

Answer: AI infrastructure encompasses all the hardware, software, data storage, networking, and integrated systems required to design, train, deploy, and scale artificial intelligence and machine learning solutions in production environments.

Who is the leader in AI infrastructure?

Answer: Major cloud providers, such as AWS, Google Cloud, and Microsoft Azure, dominate the AI infrastructure market, with innovative startups and specialized firms like NVIDIA making significant contributions to performance and scalability.

What are the 4 types of AI systems?

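Answer: A common functionality-based classification lists four types: Reactive Machines, Limited Memory, Theory of Mind, and Self-Aware AI. Each requires tailored AI infrastructure to enable its specific functionality and evolution. The section above uses a capability-based grouping instead: Narrow, Broad, General, and Super AI.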

What is the 30% rule in AI?

Answer: The 30% rule suggests organizations should expect at least a 30% resource allocation upgrade—whether in compute, storage, or human capital—when scaling AI initiatives, guiding strategic AI infrastructure investments.

FAQs on AI Infrastructure

How does AI infrastructure differ from standard IT infrastructure?

AI infrastructure is purpose-built for processing and training complex AI and ML models, supporting high-volume data workloads and parallel processing, while standard IT infrastructure is designed for general business operations.

What role does AI infrastructure play in data management?

AI infrastructure empowers seamless data collection, storage, and access, enabling organizations to manage massive volumes of structured and unstructured data for AI-driven insights and applications.

Can AI infrastructure support real-time AI applications?

Yes, modern AI infrastructure can deliver real-time inference and responses, supporting applications like chatbots, autonomous vehicles, and fraud detection with minimal lag.

What are emerging challenges in AI infrastructure management?

Organizations face challenges such as scaling efficiently, managing energy use, ensuring data security, and keeping up with rapidly evolving AI and ML frameworks.

Key Takeaways for Selecting the Right AI Infrastructure

AI infrastructure ensures the reliable, scalable delivery of AI application performance

Provider selection should weigh compute power, data storage, ML model support, and cost

Machine learning and deep learning algorithms thrive only on efficient AI infra

Generative AI, foundation models, and new AI applications rely on modern, agile infrastructure

Ongoing innovations continue to reshape the strategic AI infra landscape

Final Thoughts: Transform Your Strategy with Future-Ready AI Infrastructure

Powerful, strategic AI infrastructure isn’t just a technical requirement—it’s the key to unlocking business innovation, efficiency, and lasting market leadership.



Sources

IDC – https://www.idc.com/getdoc.jsp?containerId=prAP48405522

AWS Machine Learning – https://aws.amazon.com/machine-learning/

Google Cloud AI Solutions – https://cloud.google.com/solutions/ai

Microsoft Azure AI – https://azure.microsoft.com/en-us/explore/ai/

NVIDIA DGX Cloud – https://www.nvidia.com/en-us/data-center/dgx-cloud/

Harvard Business Review – https://hbr.org/2023/03/ai-infrastructure-strategies

To deepen your understanding of AI infrastructure, consider exploring the following authoritative resources:

“AI Infrastructure | Google Cloud”: This resource provides insights into scalable, high-performance, and cost-effective infrastructure tailored for various AI workloads. (cloud.google.com)

“What Is AI Infrastructure? | NVIDIA Glossary”: This glossary entry offers a comprehensive definition of AI infrastructure, detailing its components and their roles in supporting AI models and applications. (nvidia.com)

These resources will provide you with a solid foundation in AI infrastructure, covering its components, functionalities, and the latest advancements in the field.
